Performance and Evaluation Office (PEO) - Program Evaluation

This Public Health Reports article highlights the path CDC has taken to foster the use of evaluation. Access this valuable resource to learn more about using evaluation to inform program improvements.

What is program evaluation?

Evaluation: A systematic method for collecting, analyzing, and using data to examine the effectiveness and efficiency of programs and, just as importantly, to contribute to continuous program improvement.

Program: Any set of related activities undertaken to achieve an intended outcome; any organized public health action. At CDC, program is defined broadly to include policies; interventions; environmental, systems, and media initiatives; and other efforts. It also encompasses preparedness efforts as well as research, capacity, and infrastructure efforts.

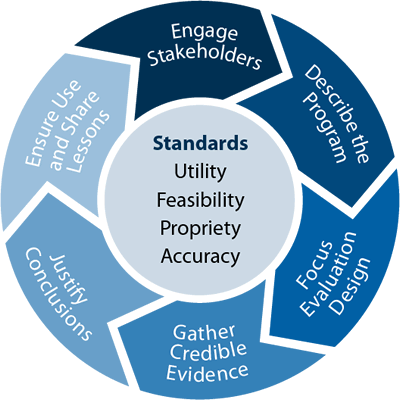

At CDC, effective program evaluation is a systematic way to improve and account for public health actions.

Why evaluate?

- CDC has a deep and long-standing commitment to the use of data for decision making, as well as the responsibility to describe the outcomes achieved with its public health dollars.

- Strong program evaluation can help us identify our best investments as well as determine how to establish and sustain them as optimal practice.

- The goal is to increase the use of evaluation data for continuous program improvement agency-wide.

We have to have a healthy obsession with impact. To always be asking ourselves what is the real impact of our work on improving health?

What's the difference between evaluation, research, and monitoring?

- Evaluation: Purpose is to determine effectiveness of a specific program or model and understand why a program may or may not be working. Goal is to improve programs.

- Research: Purpose is to test theory and produce generalizable knowledge. Goal is to contribute to the knowledge base.

- Monitoring: Purpose is to track implementation progress through periodic data collection. Goal is to provide early indications of progress (or lack thereof).

There are also similarities:

- Data collection methods and analyses are often similar between research and evaluation.

- Monitoring and evaluation (M&E) both measure and assess performance in order to improve it and achieve results.

Research seeks to prove, evaluation seeks to improve.

E-mail: cdceval@cdc.gov