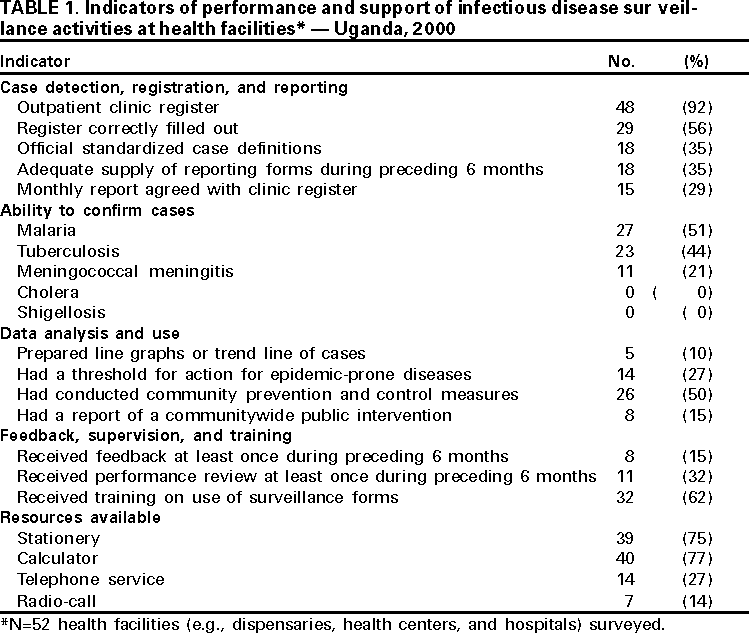

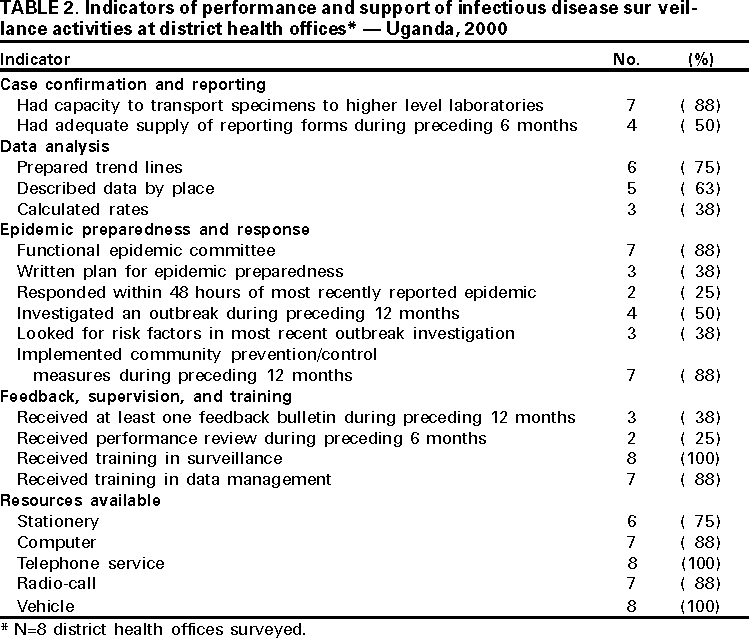

Assessment of Infectious Disease Surveillance --- Uganda, 2000

In 1998, member states of the African region of the World Health Organization (WHO-AFRO) adopted the integrated disease surveillance (IDS) strategy to strengthen national infectious disease surveillance systems (1). The first step of the IDS strategy is to assess infectious disease surveillance systems. This report describes the results of the assessment of these systems of the Uganda Ministry of Health (UMoH) and indicates that additional efforts are needed to develop the basic elements of an effective surveillance system.

In February 2000, UMoH, Makerere University Institute of Public Health, WHO, and CDC performed a cross-sectional survey to determine the performance and support of infectious disease surveillance systems conducted by UMoH at health facilities (e.g., dispensaries, health centers, and hospitals) and district health offices. The six systems assessed were the Health Management Information System, the Weekly Epidemiological Report, Tuberculosis/Leprosy, HIV/AIDS, Polio/Acute Flaccid Paralysis, and Guinea Worm Eradication. The assessment covered 52 (3%) of 1639 health facilities and eight (18%) of the 45 district health offices (two in each of the four geographic zones of Uganda). The districts were selected by UMoH on the basis of timeliness of reporting. Three or four health facilities were selected randomly within each district.
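The coverage figures and the within-district random selection described above can be illustrated with a short sketch. This is not the survey team's procedure: the function names, the facility labels, and the fixed seed are hypothetical, and only the counts (52 of 1639 facilities, eight of 45 districts) come from the report.

```python
import random


def coverage_percent(sampled: int, total: int) -> int:
    """Return the share of units assessed, rounded to a whole percent."""
    return round(100 * sampled / total)


def select_facilities(facilities: list[str], k: int, seed: int = 0) -> list[str]:
    """Draw k facilities at random, without replacement, from one district.

    The seed is a hypothetical convenience so the draw can be repeated.
    """
    rng = random.Random(seed)
    return rng.sample(facilities, k)


# Counts taken from the report.
facility_coverage = coverage_percent(52, 1639)
district_coverage = coverage_percent(8, 45)

# Hypothetical facility labels for a single district.
district_facilities = [f"facility_{i}" for i in range(1, 11)]
chosen = select_facilities(district_facilities, k=4)

print(facility_coverage, district_coverage, chosen)
```

Because the districts themselves were chosen on the basis of reporting timeliness rather than at random, only the facility-level step is modeled as a random draw here.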
Performance was measured with a protocol developed by WHO-AFRO with support from CDC (2). The protocol covered surveillance indicators (i.e., detection, registration, and confirmation of case-patients; reporting; data analysis and use; and epidemic preparedness and response) and the infrastructural and managerial support of surveillance activities (i.e., feedback, performance reviews, training, and resources).

Health Facilities

Outpatient clinic registers were present in 48 (92%) of the 52 health facilities and were filled out correctly in 29 (56%) (Table 1). Eighteen (35%) health facilities had the official standardized case definition booklet and an adequate supply of reporting forms during the 6 months before the assessment. The monthly report for the number of case-patients seen at a health facility for a selected disease (e.g., malaria or measles) was in agreement with the clinic register in 15 (29%) of the health facilities. Of the 52 health facilities, 27 (51%) had the laboratory capacity to confirm a diagnosis of malaria, 23 (44%) to confirm tuberculosis, and 11 (21%) to confirm meningococcal meningitis; none of the facilities had the capacity to confirm shigellosis or cholera.

Five (10%) health facilities analyzed data for trends, and 14 (27%) had thresholds for action in response to surveillance data for epidemic-prone diseases. Communitywide prevention and control measures had been conducted at 26 (50%) of the health facilities during the 12 months before the assessment, and reports of these interventions were available in eight (15%). During the 6 months before the assessment, most surveillance activities conducted by health facilities had received neither a performance review (68%) nor feedback (85%) from the district or national levels. Respondents at 32 (62%) health facilities had received training in the use of surveillance forms. Most health facilities had calculators (77%) and stationery (75%), but few had telephones (27%) or radio-call facilities (14%).
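As a rough illustration of how facility-level indicators such as those above reduce to counts and percentages, the sketch below tabulates four of the reported counts against the 52 facilities assessed and shows one way a register-to-report agreement check could be expressed. The indicator keys, function names, and the example register and report values are hypothetical; the report does not describe how agreement was scored.

```python
FACILITIES_ASSESSED = 52

# Counts taken from the report; the key names are hypothetical labels.
indicator_counts = {
    "register_present": 48,
    "register_filled_correctly": 29,
    "case_definitions_and_forms": 18,
    "report_agrees_with_register": 15,
}


def percent(count: int, total: int = FACILITIES_ASSESSED) -> int:
    """Return count as a whole-number percentage of total."""
    return round(100 * count / total)


def report_agrees(register_cases: int, reported_cases: int) -> bool:
    """Assumed rule: a monthly report agrees with the clinic register
    only when the two case counts are identical."""
    return register_cases == reported_cases


for name, count in indicator_counts.items():
    print(f"{name}: {count} ({percent(count)}%)")

# Hypothetical example: register shows 40 malaria cases, report shows 37.
print(report_agrees(40, 37))
```

A stricter or looser agreement rule (for example, allowing a small tolerance) would change how many facilities count as concordant, so the exact-match rule here is only one possible choice.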
District Health Offices

Seven of the eight districts had the capacity to transport specimens to a higher-level laboratory for confirmation (Table 2). Four had an adequate supply of monthly reporting forms during the 6 months before the assessment. Six districts prepared trend lines of cases and described data by place, and three calculated disease rates. Seven districts had a functional epidemic preparedness committee, three had a written plan for epidemic preparedness, and two responded within 48 hours of notification of the most recent epidemic in their district. Health personnel in four of the districts had investigated an outbreak during the 12 months before the assessment. Seven districts had implemented community prevention and control measures during the 12 months before the assessment. Three districts had received a surveillance bulletin during the 12 months before the assessment, and two had received a performance review during the preceding 6 months. All districts had personnel trained in surveillance (including for acute flaccid paralysis surveillance), and seven had personnel trained in data management. All districts had vehicles and telephone services; seven had computers and radio-call facilities.

Reported by: A Opio, MD, J Kamugisha, MD, J Wanyana, MD, M Mugaga, E Mukoyo, MD, A Talisuna, MD, J Rwakimali, MD, J Musinguzi, MD, W Kaboyo, DVM, C Mugero, MD, W Komakech, MD, G Bagambisa, MD, J Namboze, MD, N Mbona, MS, N Mulumba, R Seruyange, MD, N Bakyaita, MD, S Ndyanabangi, MD, P Mugyenyi, MD, R Odeke, MD, R Magola, G Guma, Ministry of Health; F Wabwire-Mangen, MD, D Ndungutse, MD, M Lamunu, DVM, L Lukwago, MPH, Institute of Public Health, Makerere Univ, Kampala, Uganda. N Ndayimirije, MD, W Alemu, MD, World Health Organization, Regional Office for Africa, Harare, Zimbabwe. S Chungong, MD, World Health Organization, Geneva, Switzerland. Div of International Health, Epidemiology Program Office; and an EIS Officer, CDC.
Editorial Note:

The findings in this report indicate that health facilities in Uganda lack standard case definitions and capacity to confirm priority diseases. District health offices had adequate resources but lacked epidemic preparedness and rapid response capacity. Neither health facilities nor district health offices received regular performance reviews.

Public health surveillance includes the ongoing systematic collection, analysis, and interpretation of health data with the subsequent transformation of the data into information to direct public health action (3,4). At health facilities, infectious disease surveillance systems require standardized case definitions, adequate laboratory support for disease confirmation, routine methods for reporting and feedback, and ongoing data analysis to detect and facilitate response to diseases. Health facilities also require support from higher levels for performance reviews, training, and the provision of resources for surveillance. WHO-AFRO and CDC are working with UMoH to build the capacity of the districts---the primary level of public health response---to collect and transport specimens for confirmation, analyze and use data for action, prepare for and respond to epidemics, and provide support to health facilities in Uganda.

The findings in this report are subject to at least two limitations. First, the findings are subject to interviewer bias because some of the interviewers knew about the strengths and weaknesses of the surveillance systems; however, this was offset by the presence of independent interviewers from CDC and WHO. Second, the sampling methods used to select the districts do not allow the results to be generalized to the entire country.

To improve infectious disease surveillance in Uganda, standardized case definitions must be distributed to health facilities, and health-care workers must be trained in their use.
In addition, regular supervision should be instituted to ensure proper use of case definitions, registration, and reporting veracity; regular supervision improves the willingness of health-care workers to participate in public health activities (5). UMoH also is considering initiating a regular national surveillance bulletin to promote the use of surveillance data. To respond rapidly to infectious diseases and other acute health problems, district health teams need timely, high-quality information that can be provided only by staff members with necessary skills and motivation.

References

Table 1

Table 2


This page last reviewed 5/2/01
